Our paper on deep saliency prediction will appear in CVPR 2023

© 2023 EPFL

The paper "TempSAL - Uncovering Temporal Information for Deep Saliency Prediction" by Bahar Aydemir, Ludo Hoffstetter, Tong Zhang, Mathieu Salzmann, and Sabine Süsstrunk has been accepted to CVPR 2023.

IVRL member Bahar Aydemir will present the work at the Conference on Computer Vision and Pattern Recognition (CVPR), taking place in Vancouver, Canada, from June 18 to 22, 2023.

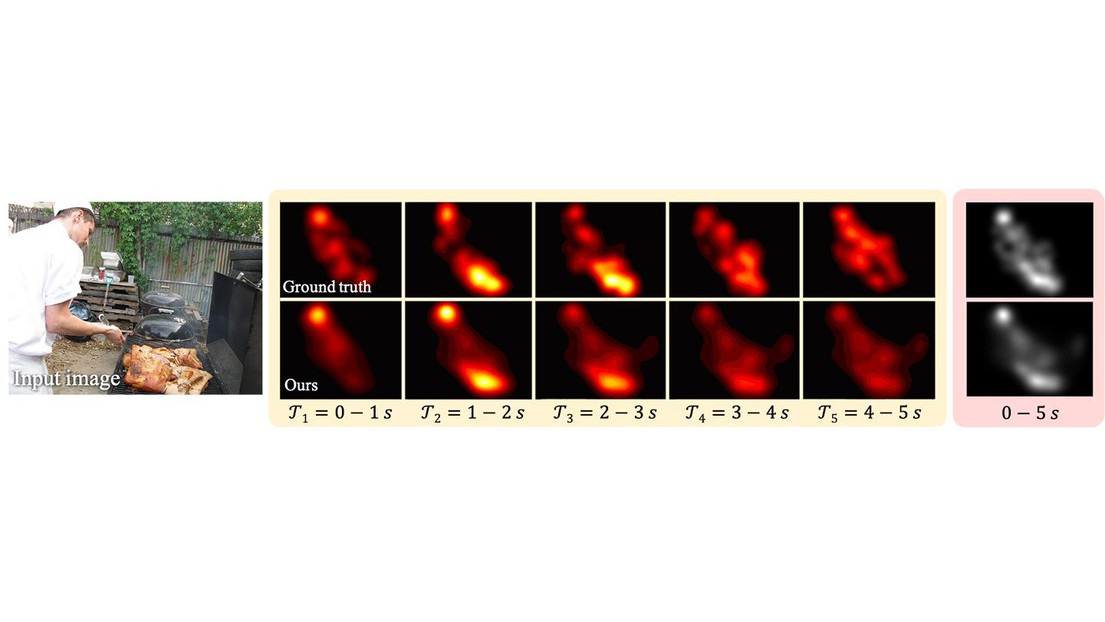

Deep saliency prediction algorithms typically complement object recognition features with additional information, such as scene context, semantic relationships, gaze direction, and object dissimilarity. However, none of these models considers the temporal nature of gaze shifts during image observation. We introduce a novel saliency prediction model that learns to output saliency maps in sequential time intervals by exploiting human temporal attention patterns. Our approach locally modulates the saliency predictions by combining the learned temporal maps. Our experiments show that our method outperforms state-of-the-art models, including a multi-duration saliency model, on the SALICON benchmark.
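To make the idea of "locally modulating" a prediction with temporal maps more concrete, here is a minimal sketch in NumPy. It is not the TempSAL implementation: it simply assumes the model produces T saliency maps (one per time interval) plus a hypothetical set of per-pixel scores, `weight_logits`, that decide how much each interval contributes at each location. A softmax over the time axis turns those scores into per-pixel weights, and the weighted sum gives a single image-level saliency map. The function names and the softmax-based fusion are illustrative assumptions.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int) -> np.ndarray:
    """Numerically stable softmax along the given axis."""
    z = x - x.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def combine_temporal_maps(temporal_maps: np.ndarray, weight_logits: np.ndarray) -> np.ndarray:
    """Fuse T per-interval saliency maps into one image-level map (illustrative sketch).

    temporal_maps: (T, H, W) non-negative saliency maps, one per time interval.
    weight_logits: (T, H, W) hypothetical per-pixel scores (e.g. produced by a
        small learned network) controlling each interval's local contribution.
    Returns an (H, W) map normalized to sum to 1, like a fixation distribution.
    """
    if temporal_maps.shape != weight_logits.shape:
        raise ValueError("temporal_maps and weight_logits must have the same shape")
    # Per-pixel weights over the T intervals; they sum to 1 at every location.
    weights = softmax(weight_logits, axis=0)
    # Local modulation: each pixel mixes the temporal maps with its own weights.
    fused = (weights * temporal_maps).sum(axis=0)
    return fused / fused.sum()


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    maps = rng.random((5, 4, 6))          # 5 time intervals, 4x6 image
    logits = rng.normal(size=(5, 4, 6))   # stand-in for learned weights
    saliency = combine_temporal_maps(maps, logits)
    print(saliency.shape, float(saliency.sum()))
```

With all-equal scores the fusion reduces to the normalized average of the temporal maps; learned scores let different image regions lean on early or late viewing intervals instead.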