In a new book, IC scientists draft a roadmap for beneficial AI

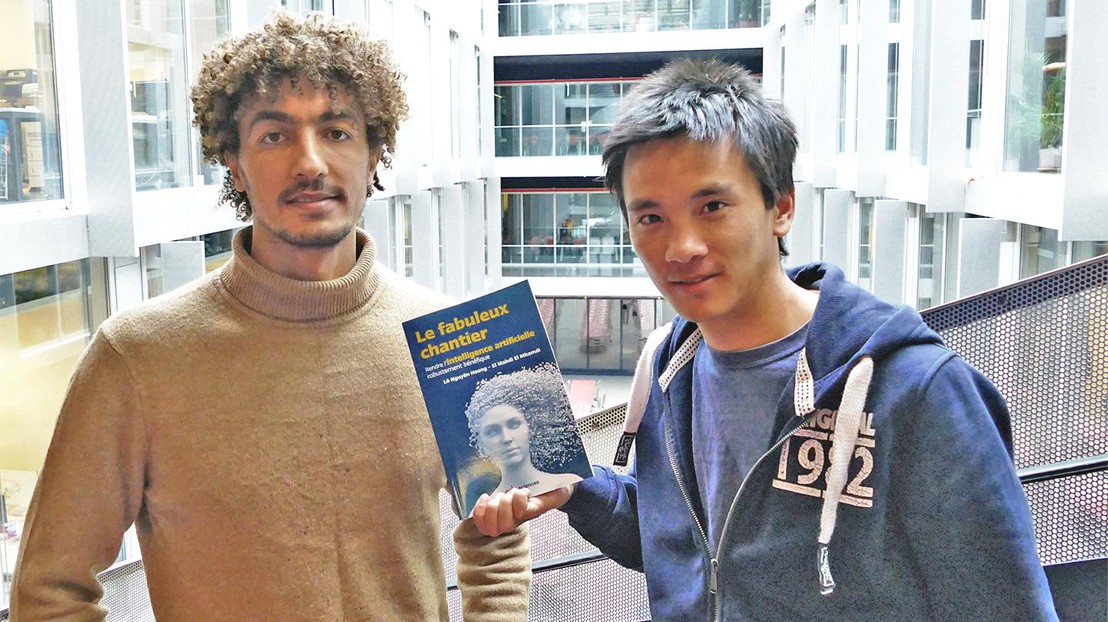

El Mahdi El Mhamdi and Lê Nguyên Hoang © 2019 IC/EPFL

Conversations about the risks of artificial intelligence often focus on tomorrow's technology (sentient robots?) going awry. But School of Computer and Communication Sciences (IC) researchers Lê Nguyên Hoang and El Mahdi El Mhamdi argue that there is an urgent need to address the risks posed by algorithms that already influence our daily activities, from reading the news to watching YouTube.

In their recently published book, “The fabulous endeavor: making AI robustly beneficial” (« Le fabuleux chantier: rendre l’intelligence artificielle robustement bénéfique »), Hoang and El Mhamdi call for ethical questions to be restated in computational terms – something they argue is lacking in both computer science and ethics research.

“There are many ethical questions in AI that are also technical. But we often hear that these are dilemmas for ethicists to solve, while ethicists will tell you that these are computer science questions,” explains El Mhamdi.

“Philosophy with a deadline”

In their book, El Mhamdi and Hoang use their expertise in machine learning systems and mathematics to provide a conceptual understanding of key algorithms for a broad audience.

One point they emphasize is the urgent need for “robustly beneficial” AI, not just for future technologies, but for the algorithms we already use. They explain how under-researched limitations and vulnerabilities of these algorithms can result in unintended side effects, posing serious risks to the human activities they impact – from communication and commerce to entertainment and politics.

“We don’t need to invoke far-fetched, sophisticated AI to talk about the urgency of AI safety,” El Mhamdi says. “For example, people worry about AI that cannot be switched off, but if you think about it, we already have millions of people unable to turn off their smartphones, because app algorithms are learning how to keep users attached to them.”

Algorithms make millions of split-second decisions every day about what content to show and promote. In the absence of ethical guidance, the authors argue, these decisions can fuel the spread of hate speech, fake news, propaganda, gender bias, and other problems. Quoting University of Oxford philosopher Nick Bostrom, Hoang and El Mhamdi refer to this urgent need as “philosophy with a deadline.”

“Every day that goes by without an ethical framework in place for algorithms is another day that people are exposed to these kinds of problematic content,” Hoang says.

The trouble with YouTube

One of the authors’ most eye-opening examples is YouTube, which records more video views per minute than Google handles searches. YouTube uses recommender algorithms to select and display videos for users; as a result, some 70% of all videos watched on the site are reached through an algorithmic recommendation rather than a direct search. This gives these algorithms a staggering amount of influence over what people see, consume, and think.

“Recommender systems have vulnerabilities, meaning malicious hackers can take advantage of them to promote undesirable ideas like fake news,” El Mhamdi says.

The authors discuss how to make these systems more robust and resilient to single points of failure, highlighting distribution and decentralization as key recommendations. They point out that re-aggregating decentralized data efficiently and securely is very difficult, and that this challenge opens fertile research areas for data scientists and statisticians as well as computer scientists.

Hoang adds that these kinds of questions lay the foundation for an emerging research field that has particular potential for early-career computer scientists, as well as those in other fields like sociology and psychology.

“Many computer science scholars today are focusing on performance, and that’s good, but these computational ethics problems are not only more urgent – they are also extremely challenging and fascinating,” he says.

« Le fabuleux chantier: rendre l’intelligence artificielle robustement bénéfique » is currently available in French from EDP Sciences. An English edition will be published in 2020.

View a video of the book launch at EPFL on November 28, 2019.

El Mahdi El Mhamdi studies the robustness of biological networks and distributed machine learning systems, and recently completed his PhD in the Distributed Computing Laboratory (DCL) led by Rachid Guerraoui. Lê Nguyên Hoang is a trained mathematician and science communicator. He runs ZettaBytes, a YouTube channel about IC research, and is the creator of the popular science communication channel Science4All. He is also the author of the book « La formule du savoir : une philosophie unifiée du savoir fondée sur le théorème de Bayes » (“The knowledge formula: a unified philosophy of knowledge based on Bayes’ Theorem”).